Why Everyone in Asia Is Suddenly Talking About OpenClaw

OpenClaw, the self‑hosted AI agent, is exploding across Asia. Learn what it is, why it matters, and how to deploy it safely under tightening regulations.

If ChatGPT was the moment AI started talking, OpenClaw is the moment it grew hands — and the wider AI community is watching very closely.

Across Hong Kong, Shenzhen, Singapore and Jakarta, early adopters are wiring OpenClaw, an open‑source, self‑hosted AI agent, into WhatsApp, WeCom, DingTalk and Slack to clear inboxes, negotiate with vendors, and draft legal letters while they sleep.

That excitement comes with real anxiety.

OpenClaw runs on your own machine, with shell access, browser control, and permission to touch live data — which is exactly what China’s cybersecurity agency has now warned could turn into a major security and data‑leak problem if deployed carelessly.

By the end of this post, you’ll know what OpenClaw actually is, why it has exploded across Asia in particular, and the concrete risk controls you need before you let “Claude with hands” anywhere near your production systems or government data.

What OpenClaw Actually Is (Beyond the Hype)

OpenClaw (formerly Clawdbot/Moltbot) is an open‑source AI agent that runs on your own hardware and talks to you through the messaging apps you already use.

Instead of being a hosted chatbot like ChatGPT, it acts as a local‑first automation gateway: it can run shell commands, control a browser, read and write files, manage calendars, and send emails — all triggered from Telegram, WhatsApp, Slack, Signal, WeChat and more.

“Claude With Hands” in One Sentence

Most users describe OpenClaw as “Claude with hands” because it:

- Connects to external LLMs (Claude, OpenAI, DeepSeek, Gemini, and local models via Ollama), while keeping orchestration and memory on your machine.

- Stores long‑term memory and skills as Markdown/YAML under your workspace and ~/.openclaw, making behaviour auditable and version‑controllable.

- Runs as a 24/7 service with a scheduler (cron‑style “autopilot tasks”) so agents can work in the background without you prompting them.

Why Everyone Can’t Stop Talking About OpenClaw

1. Local‑First Design Fits Asia’s Regulatory Reality

Across many jurisdictions, regulators are tightening rules around data residency, AI safety and cross‑border data flows.

Because OpenClaw runs on your own servers or VPCs, with state and memory stored locally, it fits organisations that can’t simply push sensitive workflows into US‑hosted SaaS AI.

At the same time, teams everywhere are under pressure to “do more with less”, whether in the public sector, financial services or e-commerce.

An always‑on, chat‑native agent that lives inside WeCom, DingTalk, WhatsApp or Line and quietly orchestrates work across tools directly addresses that productivity gap.

2. Viral Growth and “Claw Culture”

OpenClaw’s GitHub repo has gathered well over 100k stars in a short time, with thousands of forks and an active skill marketplace (ClawHub) featuring more than 3,000 skills and hundreds of community contributors.

Developers share stories that feel like sci‑fi use cases: one OpenClaw agent negotiated about US$4,000 off a car purchase via email; another found a rejected insurance claim in a user’s inbox, drafted a rebuttal citing policy language, and sent it autonomously — successfully getting the case reopened.

In China, OpenClaw has gone mainstream enough to earn the nickname “longxia” (lobster), with cities like Shenzhen launching subsidy packages to attract OpenClaw‑based startups as part of a new “open‑source AI agent” track.

The Dark Side: Why Regulators Are Nervous About OpenClaw

The same capabilities that thrill developers have caught the attention of cybersecurity agencies.

Recent advisories have warned of “serious risks” from improper OpenClaw installation and use as adoption accelerates.

The Specific Risks Regulators Are Worried About

- High‑privilege autonomy: OpenClaw often runs with shell, browser, file system and API access, so any compromise or misconfiguration gives an attacker the same reach you’ve granted the agent.

- Prompt injection and data exfiltration: Malicious webpages or documents can hide instructions which, if read by OpenClaw, may trick the agent into leaking system keys or sensitive data.

- Misoperations and data loss: There is a real risk that OpenClaw could misunderstand user intent, deleting critical emails or production data as it “helpfully” executes commands.

- Malicious plugins (“skills”): Community skills move fast, and some have been found to steal keys, deploy backdoors or join devices into botnets once installed.

Security teams now advise isolating OpenClaw with containers or VMs, avoiding exposure of default management ports to the public internet, hardening credential storage, and disabling auto‑updates for unverified skills.
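One way to limit the damage from the data-exfiltration risk above is to scan everything the agent is about to send out for credential-shaped strings. The sketch below is a heuristic filter only, and it is not an OpenClaw feature: the function name `redact_secrets` and the pattern list are assumptions made for illustration, and it will not stop prompt injection itself.

```python
import re

# Hypothetical patterns for credential-shaped strings; extend for your own providers.
SECRET_PATTERNS = [
    re.compile(r"sk-[A-Za-z0-9_-]{20,}"),               # API-key-like tokens with an "sk-" prefix
    re.compile(r"AKIA[0-9A-Z]{16}"),                    # AWS access key ID shape
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
]


def redact_secrets(text: str) -> tuple[str, int]:
    """Mask credential-shaped substrings and return (clean_text, number_of_hits)."""
    hits = 0
    for pattern in SECRET_PATTERNS:
        text, count = pattern.subn("[REDACTED]", text)
        hits += count
    return text, hits
```

A wrapper around the agent's outbound channels could call `redact_secrets` on every message and block or escalate whenever the hit count is above zero.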

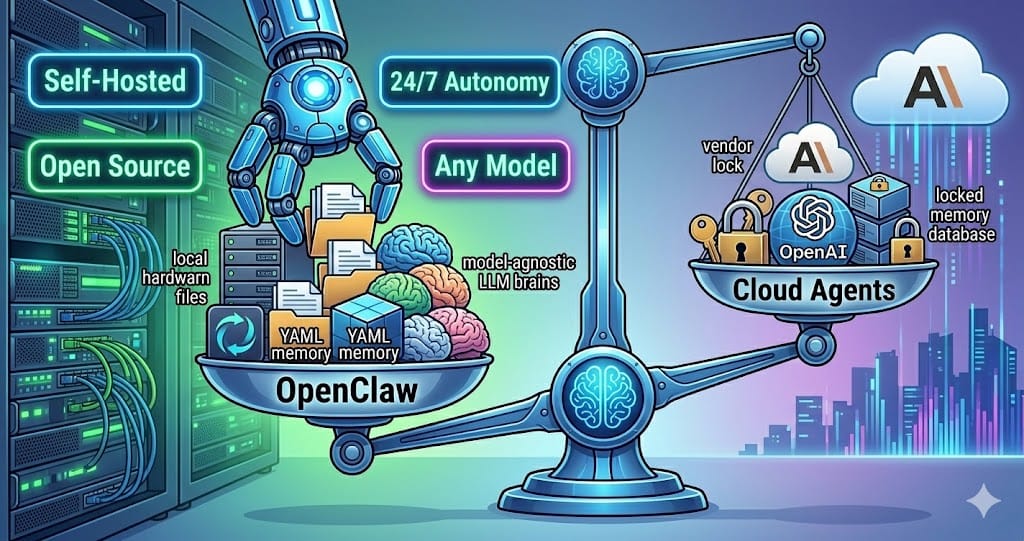

How OpenClaw Compares to Cloud AI Agents

For CIOs and digital leaders, the question isn’t “Is this cool?” — it’s “Where does this sit next to Claude, ChatGPT Agents or local AI stacks?”

| Feature | OpenClaw | Claude / ChatGPT Agents |
|---|---|---|
| Hosting | Self‑hosted, your hardware/VPC | Vendor cloud (Anthropic / OpenAI) |
| Source | Open‑source MIT gateway | Closed source |
| Interface | Messaging apps, CLI, APIs | Web UI, APIs, IDE integrations |
| Memory | Local Markdown / YAML files | Stored in provider accounts |
| Autonomy | 24/7 daemon, cron‑like scheduler | Mostly event / request driven |
| Model choice | Any OpenAI‑compatible or local models | Tied to provider ecosystem |

For teams trying to balance sovereignty, cost and capability, that mix is unusually attractive — but only if you treat OpenClaw as high‑privilege infrastructure, not a weekend toy.

Safe Adoption Playbook for Real-World Teams

1. Isolate First, Experiment Later

- Run OpenClaw on a dedicated VM, container or separate machine, not on your primary laptop.

- Avoid exposing default ports to the public internet; front it with VPNs or zero‑trust access and strict firewall rules.
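A quick pre-flight check can catch the most common exposure mistake: binding the agent's management interface to every network interface. The sketch below assumes you know the host address the service will listen on. `is_publicly_exposed` is a hypothetical helper, not part of OpenClaw, and it does not replace firewall rules or a VPN.

```python
import ipaddress


def is_publicly_exposed(bind_host: str) -> bool:
    """Return True if bind_host would accept traffic from outside loopback or private ranges."""
    if bind_host == "localhost":
        return False
    addr = ipaddress.ip_address(bind_host)
    if addr.is_unspecified:
        # 0.0.0.0 and :: mean "listen on every interface", including public ones.
        return True
    return not (addr.is_loopback or addr.is_private)
```

For example, `is_publicly_exposed("0.0.0.0")` is true while `is_publicly_exposed("127.0.0.1")` is false, so a deployment script could refuse to start when the check returns true.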

2. Treat Skills as Untrusted Code

Skill ecosystems like ClawHub move fast and include vulnerable or outright malicious packages.

- Fork third‑party skills into your own repo.

- Review code end‑to‑end before enabling in production.

- Disable automatic skill updates and only install from curated sources.
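The review-then-pin workflow above can be enforced mechanically by recording a content hash for each skill you have reviewed and refusing anything that does not match. This is a minimal sketch under assumptions: the skill file name, the `approved` allowlist and both function names are hypothetical, and nothing here reflects how ClawHub or OpenClaw actually load skills.

```python
import hashlib


def content_hash(data: bytes) -> str:
    """Hex SHA-256 digest of a skill file's raw bytes."""
    return hashlib.sha256(data).hexdigest()


def skill_is_approved(name: str, data: bytes, approved: dict[str, str]) -> bool:
    """Allow a skill only if its name is pinned and its content matches the reviewed hash."""
    expected = approved.get(name)
    return expected is not None and content_hash(data) == expected


# Pin the hash at review time, then re-check before every load.
reviewed = b"# hypothetical skill: summarise-inbox\n"
approved = {"summarise-inbox.md": content_hash(reviewed)}
```

Because the hash covers the whole file, a silent upstream update or a tampered copy fails the check until someone reviews it and pins the new hash.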

3. Put Humans in the Loop for Irreversible Actions

“My @clawdbot accidentally started a fight with Lemonade Insurance because of a wrong interpretation of my response. Lemonade declined a claim regarding my best friend, and I was really sad about it. The agent discovered the rejection email and offered me a draft email, but I…”

Nikita 🤙 (@Hormold), January 13, 2026

The viral “accidental insurance fight” story — where an agent autonomously drafted and sent a legal rebuttal — shows why approvals matter.

- Require human approval for payments, deletions, system changes, and external communications that carry legal or financial impact.

- Use OpenClaw’s tool policies or wrapper scripts to enforce those checks by design.
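A wrapper-style approval gate might look roughly like the sketch below. It is written from scratch for illustration and does not use OpenClaw's tool-policy format: the `Action` type, the `IRREVERSIBLE` categories and the `approve` callback are all assumptions. In practice the callback would post to a chat channel or ticket queue and wait for a person to answer.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical action categories that must never run without a human sign-off.
IRREVERSIBLE = {"payment", "delete", "system_change", "external_message"}


@dataclass
class Action:
    kind: str          # category such as "delete" or "read_calendar"
    description: str   # human-readable summary shown to the approver


class ApprovalDenied(Exception):
    """Raised when a human declines an irreversible action."""


def run_with_approval(
    action: Action,
    execute: Callable[[], str],
    approve: Callable[[Action], bool],
) -> str:
    """Run execute() directly for safe actions; ask approve() first for irreversible ones."""
    if action.kind in IRREVERSIBLE and not approve(action):
        raise ApprovalDenied(f"Human declined: {action.description}")
    return execute()
```

Keeping the gate outside the model's control is the design point: the agent can propose an action, but only code it cannot rewrite decides whether that action runs.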

4. Control Token Spend and Model Mix

OpenClaw is model‑agnostic, but large context windows plus frequent heartbeats can quietly drive high API bills with frontier models.

- Use lighter models (for example DeepSeek or Claude Haiku) for routine monitoring, reserving premium models for complex reasoning.

- Set alerts and hard spending caps with your LLM providers before rolling out to multiple teams.
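Provider-side caps can be complemented by a small guard in your own wrapper code that tracks spend and routes routine work to a cheaper tier. The sketch below is illustrative only: `SpendGuard`, `pick_model`, the tier labels and the task names are hypothetical, and the per-million-token prices are parameters you would supply from your provider's current price list.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a call would push spend past the hard cap."""


@dataclass
class SpendGuard:
    monthly_cap_usd: float
    alert_fraction: float = 0.8   # warn once 80% of the cap is used
    spent_usd: float = 0.0

    def record(self, tokens_in: int, tokens_out: int,
               usd_per_m_in: float, usd_per_m_out: float) -> str:
        """Add one call's cost; return "ok" or "alert", or raise at the cap."""
        cost = (tokens_in * usd_per_m_in + tokens_out * usd_per_m_out) / 1_000_000
        if self.spent_usd + cost > self.monthly_cap_usd:
            raise BudgetExceeded(f"cap of {self.monthly_cap_usd} USD would be exceeded")
        self.spent_usd += cost
        return "alert" if self.spent_usd >= self.alert_fraction * self.monthly_cap_usd else "ok"


def pick_model(task_kind: str) -> str:
    """Route routine background work to a light tier and everything else to a premium tier."""
    return "light" if task_kind in {"heartbeat", "monitoring"} else "premium"
```

Calling `record` before each request, with an estimated token count, turns the cap into a pre-flight check rather than a surprise on the invoice.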

China has issued guidelines on best practices and prohibitions for adopting and using OpenClaw, which can be summarised as follows: use the latest official version, minimise internet exposure, grant only the minimum permissions necessary, exercise caution with the third-party offerings that fill the skill market, guard against browser hijacking, and regularly check for and patch vulnerabilities.

Final Takeaway

Everyone is talking about OpenClaw because it marks a real shift: from chatbots that answer, to self‑hosted AI agents that act across the same messaging platforms your teams already live in.

In a world of rising regulatory scrutiny and relentless productivity pressure, that’s a powerful opportunity — as long as you deploy OpenClaw with the same discipline you apply to any high‑privilege system.

If you’re planning a pilot, your next step is clear: design a small, isolated OpenClaw sandbox with strict security controls, curated skills and monitored spend — then map where always‑on agents can deliver compounding value in your organisation.